Hearing people often find it difficult to master the grammatical nuances of sign language, including hand and finger coordination, facial expressions, head positions, and mouth shapes. Get one element wrong, and the message will often be misunderstood. Some people may think that the deaf would be able to cope perfectly well with written communication or text messaging. Professor Felix Sze, Deputy Director of CUHK’s Centre for Sign Linguistics and Deaf Studies (CSLDS), begs to differ.

She thinks that written communication poses a huge challenge to the deaf. ‘The Chinese sentence “I have no book” becomes “I book no have” in sign language. To require the deaf who are not native readers and writers of Chinese to follow Chinese grammar is like asking a Chinese-speaking person to express herself in Chinese while following English syntax.’ Hence, an understanding of the communication needs of the deaf becomes a prerequisite for a truly inclusive society.

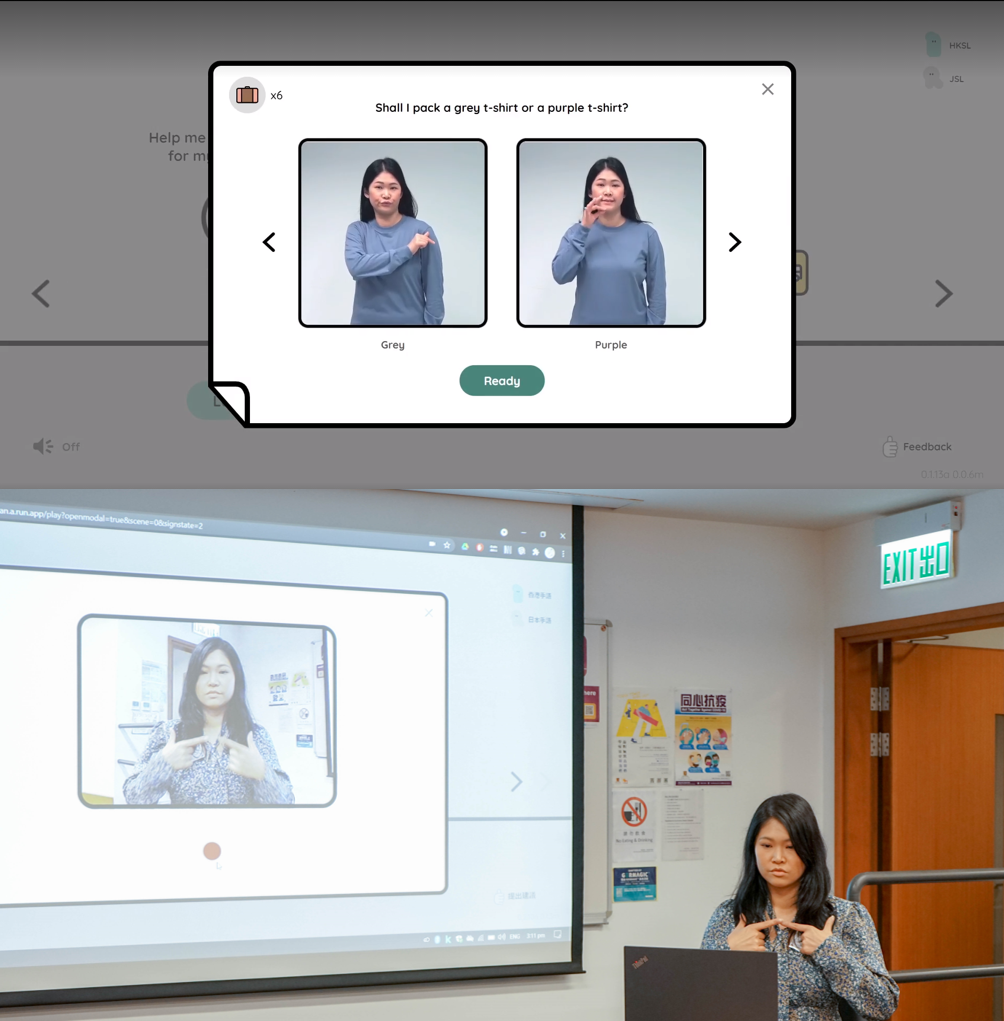

Immersive game for sign language learners

An initiative of Project Shuwa, SignTown has been made possible by international cross-disciplinary collaboration between CUHK, Google and Japanese counterparts, with the aim of bridging the gap between the deaf and hearing worlds with advanced technology. ‘Shuwa’ (‘手話’ in Japanese characters) means ‘sign language’. The game was launched on 23 September 2021, the International Day of Sign Languages.

AI-based movement recognition

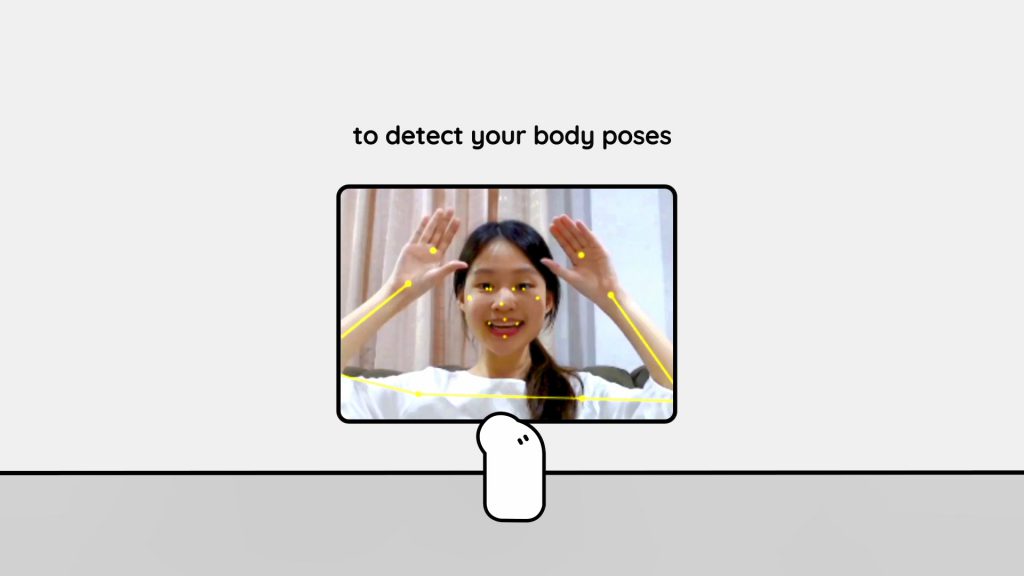

While sign languages vary from one country to another, phonetic features such as handshapes, orientations and movements are universal, and the number of possible combinations is finite. Recognition models are therefore possible. However, recognising sign language, including hand and body movements, has typically required advanced equipment such as special 3D cameras and gloves with sensors, making it hard to popularise the technology.

Project Shuwa has addressed these pain points by constructing the first machine learning model that uses a computer camera to recognise, track and analyse 3D hand and body movements as well as facial expressions. Currently, players can choose between Japanese and Hong Kong sign languages. More options will be available in the future.

The role of CSLDS in Project Shuwa is to provide sign linguistic knowledge and features of Hong Kong sign language to develop the machine learning model. ‘As sign linguists, we want to help our collaborators to develop a sign language recognition tool which is smart enough to tell whether the players are making accurate signs,’ said Professor Sze.

The future goal of the project is to build a sign dictionary that not only incorporates a search function, but also provides a virtual platform to facilitate sign language learning and documentation based on AI. The project team aspires to develop an automatic translation model that can recognise natural conversations in sign language and convert them into spoken language using the cameras of commonly used computers and smartphones.

Professor Katsuhisa Matsuoka, Director of the Centre for Sign Language Studies at Kwansei Gakuin University, said, ‘It is a great pleasure for our centre to be involved in the technical development of Project Shuwa. We hope in the future to be able to develop expert versions of learning tools for medical and legal professionals. These are all worth exploring.’
